Releasing new software can be a complex and lengthy process. Rapid feedback on whether your latest build succeeded is critical for fixing defects quickly: the later a defect is discovered in the product life cycle, the more costly and difficult the fix.

Azure DevOps is an integrated set of software project management services and tools. It can be consumed as a fully managed cloud offering called Azure DevOps Services (formerly Visual Studio Team Services, or VSTS) or as an on-premises offering called Azure DevOps Server (formerly Microsoft’s Team Foundation Server, or TFS).

In either format, Azure DevOps automates and streamlines the software build process. However, for larger products, the Azure DevOps feedback loop can be too long. This post shows how combining the automation capabilities of the Azure DevOps build process with Incredibuild’s process virtualization capabilities speeds up your compilation time. It does so by allowing your Azure DevOps build machine to use the cores of all the machines across your network or public cloud, seamlessly transforming it into a supercomputer with hundreds of cores and gigabytes of memory.

About the Azure DevOps Build Process and Its Current Limits

Azure DevOps is a suite of tools that addresses the entirety of a development team’s needs—starting from requirement gathering and work management; continuing through software development, version management, and unit and acceptance testing; all the way to software packaging, building, and deployment. Azure DevOps has native integration with Microsoft’s own integrated development environment (IDE) called Visual Studio, and is geared toward building software written in Microsoft-supported software languages under its default settings; but Azure DevOps also fully integrates with other IDEs and can build products in virtually any software language.

The Azure DevOps build process is managed by triggering a pipeline. A pipeline consists of one or more stages, and each stage groups one or more jobs. Each job runs on one agent, which can be Microsoft-hosted (i.e., with fully managed maintenance and upgrades) or self-hosted. A job comprises multiple steps and can run directly on the agent’s host machine or in a container. A step, which is either a pre-packaged task or a script, is the smallest building block in the pipeline. The pipeline itself is stored as a human-readable YAML (YAML Ain’t Markup Language) file.
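To make the stage, job, and step hierarchy concrete, here is a minimal Python sketch that mirrors the nesting of a pipeline definition as plain dictionaries. It is only an illustration: the stage names, job names, and `echo` scripts are invented, the structure is far simpler than the real pipeline schema, and the `walk` helper is hypothetical rather than part of any Azure DevOps API.

```python
# A simplified, hypothetical pipeline: stages contain jobs, jobs contain steps.
pipeline = {
    "stages": [
        {"stage": "Build", "jobs": [
            {"job": "Compile", "steps": [
                {"script": "echo restore dependencies"},
                {"script": "echo compile sources"},
            ]},
        ]},
        {"stage": "Test", "jobs": [
            {"job": "UnitTests", "steps": [
                {"script": "echo run unit tests"},
            ]},
        ]},
    ]
}


def walk(definition):
    """Yield (stage, job, step_number, script) in the order they are listed."""
    for stage in definition["stages"]:
        for job in stage["jobs"]:
            for number, step in enumerate(job["steps"], start=1):
                yield stage["stage"], job["job"], number, step["script"]


if __name__ == "__main__":
    for stage_name, job_name, number, script in walk(pipeline):
        print(f"{stage_name} / {job_name} / step {number}: {script}")
```

Because each job is bound to a single agent, the steps inside a job are the part of the pipeline that runs one after another on the same machine.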

The Azure DevOps agent will typically use a single CPU core for one job, with a majority of the job executed sequentially. Because very few parts of the pipeline run in parallel to each other, improving performance requires increasing the speed of the agent’s CPU for CPU-bound computational processes and of its IO capabilities for IO-bound processes, such as reading and writing to the file-system or the network.

However, today’s multi-core CPUs achieve faster performance only if operations are run in parallel. While it’s true that the dual-core CPU can run twice as many operations as its single-core counterpart, it must run them in parallel, two at a time, in order to achieve the goal of doubling the speed. As software developers, therefore, we must seek to break down and parallelize as many of our CPU-bound operations as we can to maximize the speed of our processes.
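The relationship between core count and achievable speedup can be estimated with Amdahl's law, which is not named in this post but captures the same point. The sketch below assumes that a fixed fraction of the build can be parallelized perfectly and that the rest stays sequential; the function name and the sample fractions are illustrative only.

```python
def amdahl_speedup(parallel_fraction: float, cores: int) -> float:
    """Ideal speedup when `parallel_fraction` of the work scales across `cores`."""
    if not 0.0 <= parallel_fraction <= 1.0:
        raise ValueError("parallel_fraction must be between 0 and 1")
    if cores < 1:
        raise ValueError("cores must be at least 1")
    serial_fraction = 1.0 - parallel_fraction
    return 1.0 / (serial_fraction + parallel_fraction / cores)


if __name__ == "__main__":
    # Compare a mostly sequential build with a mostly parallel one on 2 and 8 cores.
    for fraction in (0.0, 0.5, 0.9, 1.0):
        for cores in (2, 8):
            speedup = amdahl_speedup(fraction, cores)
            print(f"parallel={fraction:.0%} cores={cores}: {speedup:.2f}x")
```

A dual-core machine only reaches the full 2x when the whole workload is parallel; if half of the build is sequential, the same machine tops out at roughly 1.33x.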

Tapping into More Resources

In this section, we’ll look at the different ways in which the Azure DevOps build process can be improved to make better use of multicore CPUs. We’ll examine the benefits, costs, and limitations of each method, with a focus on CPU-bound operations.

Commonly Used Parallelization Options for CPU-Bound Activities

While certain software development frameworks may make parallelization easier or even all but invisible to developers, generally speaking, some effort must be made to parallelize operations. The most common solutions involve setting up a cluster (also called a farm) made up of multiple machines or setting up a many-core machine—a High-Performance Computer.

Clustering

Clustering is the use of multiple machines for the same purpose. Azure DevOps uses clustering to govern the build process, where the pipeline allocates an agent for each build request it receives. In essence, the Azure DevOps pipeline parallelizes at the level of the build-process job, running one complete job on each agent so that multiple build requests may be handled in parallel.

Theoretically, if an infinite stream of build requests is triggered and the agents are fully utilized, you will have completed as many Azure DevOps jobs as you have agents in the time it takes to run a single build. While this fully utilizes the build system, it would be far better to allocate as many resources as possible to complete a single build; when it’s done, as many resources as possible will again be allocated to running the next build, and so on.
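The difference between the two allocation strategies is easiest to see with numbers. The following sketch is an idealized model, not a measurement: it assumes every build takes the same time on one agent, that a build split across all agents scales perfectly with no coordination overhead, and the function names are made up for this example.

```python
def job_level_finish_times(builds: int, agents: int, build_minutes: float) -> list:
    """One whole build per agent: builds complete in waves of `agents` at a time."""
    return [(index // agents + 1) * build_minutes for index in range(builds)]


def build_level_finish_times(builds: int, agents: int, build_minutes: float) -> list:
    """All agents cooperate on one build at a time (ideal, zero overhead)."""
    minutes_per_build = build_minutes / agents
    return [(index + 1) * minutes_per_build for index in range(builds)]


if __name__ == "__main__":
    builds, agents, build_minutes = 4, 4, 60
    per_job = job_level_finish_times(builds, agents, build_minutes)
    per_build = build_level_finish_times(builds, agents, build_minutes)
    print("one build per agent:", per_job, "average wait", sum(per_job) / builds)
    print("all agents per build:", per_build, "average wait", sum(per_build) / builds)
```

In this model both strategies finish the last build at the same moment, but pooling resources on one build at a time returns the first result after 15 minutes instead of 60, which is the feedback time a developer actually experiences.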

For simpler projects, this optimization could be achieved by breaking down the build job into multiple sub-jobs (compiling, running each test, etc.) and having the original job send a new build request for each sub-job. However, this approach incurs very high overhead in terms of synchronizing the environments and the outputs of each sub-job. Also, because it requires setting up the necessary software on each participating agent (if self-hosted), the parallelization efforts will be constrained by the number of machines that are dedicated to the build process.

Given the complexity and expense of such “manual” parallelization efforts, this approach is not a viable solution.

High-Performance Computing

One alternative to governing many single-core agents is to have one multicore agent. This is where High-Performance Computing (HPC) comes in. HPCs (High-Performance Computers) are essentially computers (physical or virtual) that can aggregate computing power in a way that delivers a much higher performance than that of an individual desktop computer or workstation. HPCs make use of many CPU cores in order to accomplish this. Windows-based machines can support up to 256 cores.

The build process can be easily parallelized if run on a multicore agent. If, for example, you parallelize the software projects (collections) that you need to build, you can compile as many collections in parallel as you have, up to the limit of the available cores, and each collection can in turn compile many units in parallel. Similarly, unit tests are (or should be) designed to be independent and thus easily parallelizable. Other tests can often be parallelized as well.
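As a rough sketch of building independent projects in parallel on one multicore machine, the example below fans work out with Python's standard `concurrent.futures` module. The `compile_project` function is a hypothetical stand-in that only returns a status string; in a real pipeline it would launch the compiler as an external process, which is why a thread pool is enough to keep several cores busy. The project names are invented.

```python
import os
from concurrent.futures import ThreadPoolExecutor


def compile_project(name: str) -> str:
    """Hypothetical stand-in for a real compile step.

    A real implementation would start the compiler as a child process
    (for example with subprocess.run) and report its exit status.
    """
    return f"{name}: ok"


def build_all(projects, max_workers=None):
    """Compile independent projects concurrently, capped at the core count."""
    workers = max_workers or os.cpu_count() or 1
    with ThreadPoolExecutor(max_workers=workers) as pool:
        # map() returns results in the same order as the input projects.
        return list(pool.map(compile_project, projects))


if __name__ == "__main__":
    for line in build_all(["core", "ui", "tests"]):
        print(line)
```

This only works when the projects do not depend on one another; projects with dependencies would have to be ordered first, and the number of useful workers is still bounded by the cores of that single machine.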

However, while the multicore HPC approach can increase overall performance, it has two inherent limitations: capacity and granularity. The first is capacity: a 64-bit Windows-based system is (currently) limited to 256 cores, which is a hard ceiling for complex, multi-platform software projects.

The second factor is granularity. Unless you invest a great deal of effort in modifying the way your software is compiled, the project collection (or top-level project) is atomic, and building it cannot be distributed across multiple build agents.

There is, of course, a third factor: price. HPCs are extremely expensive and hardly the obvious choice for accommodating all build-related processes.

Going Beyond the Limits of Clusters and HPCs

Is there a way to parallelize the build process without expending an exorbitant amount of effort on breaking down the process into discrete workflows, or an exorbitant amount of money on buying an expensive machine? Is there a way we can go beyond the granularity limits of the workflow or solution?

The answer is yes. Incredibuild lets you parallelize individual OS processes and delegate their execution across a cluster of hosts while seamlessly virtualizing the build agent environment on the remote machines.

Incredibuild Transforms Your Azure DevOps Agent into a 500-Core High-Performance Machine

With Incredibuild’s unique Virtualized Distributed Computing solution, you can easily achieve dramatically faster builds. Incredibuild transforms any Azure DevOps build machine into a virtual supercomputer by allowing it to harness idle CPU cycles from remote machines across the network or public cloud even while they’re in use. There are no changes to source code and absolutely no additional hardware required.

Incredibuild can be incorporated into your existing Azure DevOps build pipeline and accelerate your build times by allowing your build system of choice (such as MSBuild) to run hundreds of compilation tasks in parallel. When activated, Incredibuild intercepts the compilation processes that the build system creates and redirects their execution to another machine. Incredibuild’s virtualization technology emulates the build agent environment on demand on the remote host, so the remote compilation process executes as if it were running on the build agent, and the compilation results and every other output are sent back to the agent.

It’s important to note that nothing needs to be installed or copied to the remote machines; Incredibuild emulates everything that will be needed by the remote compilation process on-the-fly (including the dev stack, DLLs, executables, source files, etc.).
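To get a feel for what process-level distribution can do to build time, the toy model below estimates how long a set of independent compilation tasks takes on a given number of cores. It does not use or represent any Incredibuild API: it is a generic greedy scheduling simulation that ignores network transfer, task dependencies, and scheduling overhead, and the task durations are invented.

```python
import heapq


def makespan(task_seconds, cores: int) -> float:
    """Estimate total build time when tasks are spread greedily over `cores`.

    Longest tasks are placed first, each on the core that becomes free earliest.
    """
    if cores < 1:
        raise ValueError("cores must be at least 1")
    free_at = [0.0] * cores  # min-heap of the time each core becomes free
    heapq.heapify(free_at)
    for seconds in sorted(task_seconds, reverse=True):
        earliest = heapq.heappop(free_at)
        heapq.heappush(free_at, earliest + seconds)
    return max(free_at)


if __name__ == "__main__":
    tasks = [10] * 200  # 200 hypothetical compile tasks of 10 seconds each
    for cores in (1, 8, 100):
        print(f"{cores:>3} cores: {makespan(tasks, cores):.0f} s")
    # A single long task limits the gain, no matter how many cores are added.
    print(f"with one 300 s task: {makespan([300] + tasks, 100):.0f} s")
```

The last line shows why granularity matters: once enough cores are available, the longest single task determines the build time, so the finer the unit of work that can be distributed, the more the extra cores help.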

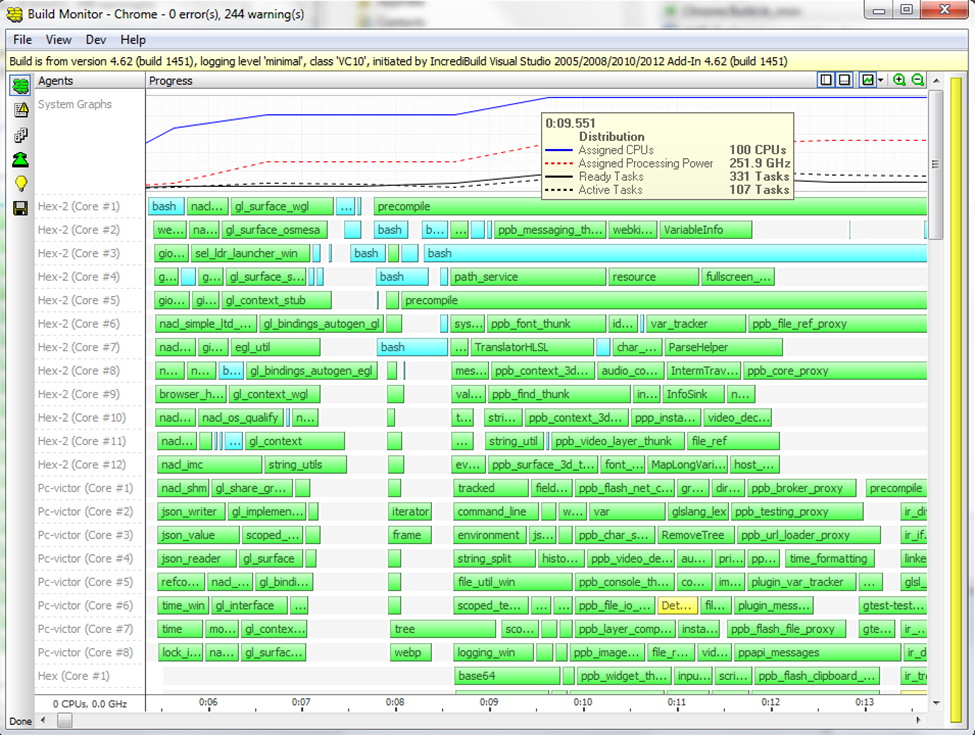

In Figure 1 (above), you can see all the cores participating in the build acceleration (100 cores in this scenario). Every horizontal bar in the build monitor represents a task being executed.

Minitab is a fine example of using Incredibuild Cloud in conjunction with Azure DevOps to speed up the build process. Minitab, maker of a powerful statistical software package, needed a way to shorten build times after shifting its CI to the cloud. Using both Incredibuild Cloud and Azure DevOps proved to be the right combination of power and speed, as Tony explains: “Incredibuild running on 100 machines proved to be very effective in supporting Minitab’s development optimization goals.”

That’s just one example out of many. In fact, almost any workload that can execute multiple processes in parallel can be accelerated by Incredibuild in that way, whether activated by the Azure DevOps agent or by a developer’s workstation! This means that effectively, Incredibuild transforms any machine into a 500+ core host, allowing time-consuming workloads to run much faster using idle resources you already own across your network or by using on-demand public cloud resources.

Go Big or Go Home

Organizations today can’t afford to be left behind. Speed, agile, fast: these are not just words. These are values to live (or die) by. Each step of the process is examined and optimized to the maximum, as it should be. That’s why our customers, leading companies in their industries, don’t compromise on speed or quality. They want both, and they want them now (or yesterday). Looking at the build process is a good place to start. A slow build process is bad business, especially if it can be sped up without affecting quality or wasting resources.